Knowledge of image manipulation and “fakes” must be gathered through play

Danish children and young people play with manipulating images and videos every single day. The possibilities keep getting wilder and more fun, but unfortunately they can also lead to violations. As adults, we must make sure we play WITH the children while helping them reflect on what they are doing.

As adults, we must understand that as the technology on young people's platforms, such as Snapchat and TikTok, improves, image manipulation becomes easier, more fun and potentially more worrying.

At the recent seminar "From adorable deer eyes to fake nudes", which we at CfDP co-hosted with the Media Council for Children and Young People and Save the Children, a world of memes, cheapfakes and fake nude pictures was opened up. We chose this theme because children's and young people's visual communication has exploded in recent years, and also because the number of cases involving edited nude images doubled in 2019. A 2018 study by Save the Children also found that almost half of young people aged 13 to 17 have witnessed peers edit photos of someone with the intention of making them look more sexual.

"As long as something is clearly fake, everybody can laugh at it. But the technology is about to become so sophisticated that doubt can arise."

Jonas Ravn, senior counselor at CfDP

Chipmunks on crack are funny

When children and young people effortlessly use the built-in image-manipulation features in, for instance, TikTok or Snapchat, it is primarily because these features are so simple. It is easy and fun to swap faces and change your voice so that you sound like a chipmunk on crack. Playful fun with media is what drives it.

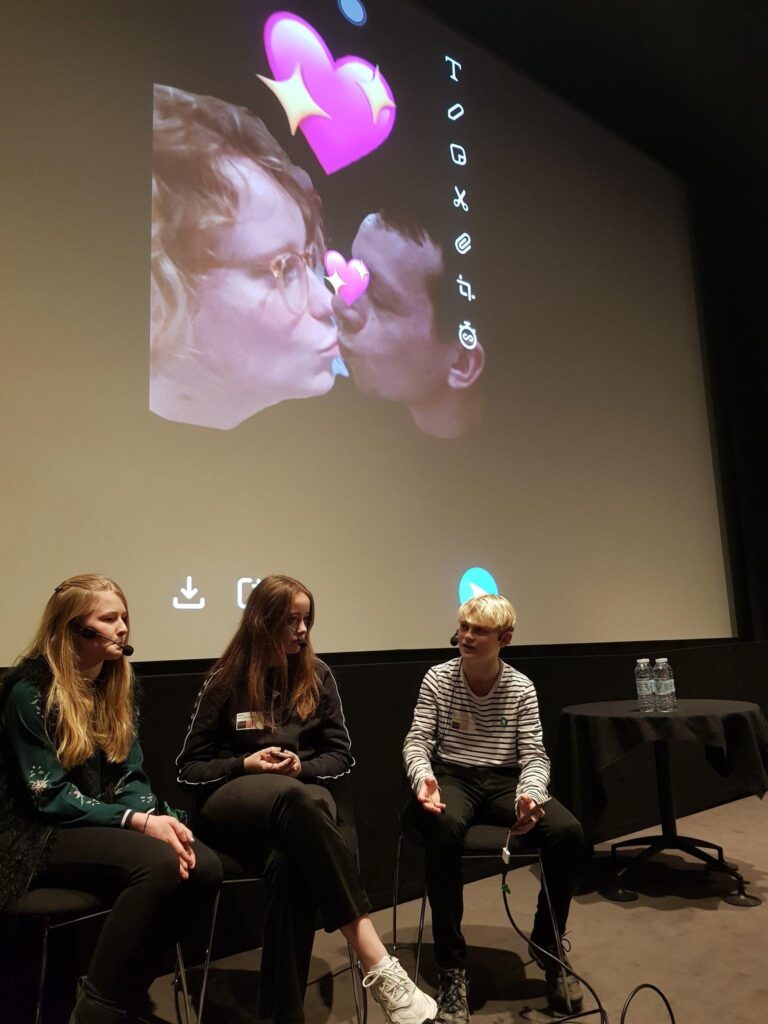

At the seminar in January, young people showed us themselves that it takes only 30 seconds to manipulate an image so that two "innocent" people appear to be kissing. It is fun, and it is quite obvious that you are just playing around. At the same time, it is easy to see that the result is fake. As long as something is clearly fake, everybody can laugh at it. But the technology is about to become so sophisticated that doubt can arise.

What can we count on?

The simplest form of image and video manipulation is popularly called "cheapfakes": the cheap, easily accessible manipulation that is built into several social media apps today. This is what happens when we swap faces on Snapchat or slow down a video to make the person in it sound tipsy. That kind of simple manipulation is now edging into "deepfake" territory, which has normally been reserved for people with more advanced and expensive AI-powered editing tools.

What is fun and innocent can suddenly become a tool and a channel for far greater problems. In January, the American outlet TechCrunch revealed that unreleased code had been found in TikTok. ByteDance, the company behind TikTok, had developed this code to biometrically scan a person's face so that it could be seamlessly inserted into videos.

This is where concerns began to arise. They concerned what a Chinese company wants with our biometric data, and also the fact that it is getting harder and harder to tell the real from the manipulated. In the worst case, children and young people become victims of violations, for example when their profile picture is manipulated onto a naked body. That kind of manipulation is illegal.

We have to think carefully

Should we ban it? Should we raise awareness of how dangerous the technology is? Or should we play with the kids and make sure (and hope) that the play also gives them enough media literacy to recognize when something might be fake?

The latter, at least, is important. It is well known that the most effective digital education happens when adults and children or young people use media together. That means talking while playing Fortnite together, discussing the latest influencer video on YouTube, or chatting while trying to edit a selfie in the Facetune app.

There is no doubt that subjects such as image manipulation, deepfakes and the potential violations associated with them will be a focus area for us at CfDP now and in the future. We continuously monitor technological and ethical developments in the field, and we look forward to discussing image culture with children, young people and professionals.

You can learn more about Safer Internet Day at https://www.saferinternetday.org/.
